GPT-4 gets financial calculations wrong 71% of the time when it does not have access to a calculator tool. Even WITH a calculator, it still fails 14% of the time because it sets up the problems incorrectly. That is from a GitHub Next study that tested the model on financial calculations like mortgage math, rate of return, and weighted averages. The same type of arithmetic that goes into every comparative market analysis (CMA). Price per square foot. Percentage adjustments. Weighted comp analysis. And without a calculator, the best AI model in the world gets it wrong more often than it gets it right.

Sounds like a problem, right? Because a lot of agents are typing “pull comps for 123 Main St” into ChatGPT and handing that output to sellers as if it means something.

I know because I have seen it. I have also spent the last year building a CMA system that does the opposite of what most people think AI does. So let’s talk about why large language models fundamentally cannot do real estate math, what the actual liability is when they get it wrong, and what it looks like to build something that works.

AI Does Not Calculate. It Predicts What an Answer Should Look Like.

This is the part most agents (and honestly most tech people) do not understand about ChatGPT, Claude, Gemini, or any other large language model. These systems are not calculators. They are pattern matchers. They predict the next word in a sequence based on statistical patterns in their training data.

When you ask a language model “what is 47 times 83” it does not multiply. It looks at billions of examples of text where numbers appeared near each other and predicts what number is most likely to follow that particular pattern of tokens. Sometimes it gets it right. Sometimes it confidently tells you the answer is 3,891 when it should be 3,901. And it does both with the exact same level of confidence, which is the scary part.

That is fine when you are asking it to write an email or summarize a document. It is not fine when you are using it to determine what someone’s house is worth.

Researchers at Saarland University showed that when you pair a language model with a deterministic calculator module, arithmetic accuracy jumps to 99%. The calculator module outperformed models nearly 70 times its size at basic math. Not because the big models are bad at language. Because they were never designed to compute. They were designed to predict.
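To make the "deterministic calculator" idea concrete, here is a minimal Python sketch of a calculator tool that a language model could hand arithmetic to. It is not the module from the Saarland research, and the function name `calculate` is made up for illustration. It only shows that code evaluates the expression instead of predicting it.

```python
import ast
import operator

# Only plain arithmetic is allowed; everything else is rejected.
_OPERATORS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def calculate(expression):
    """Evaluate a basic arithmetic expression by computing it, not guessing it."""

    def evaluate(node):
        if (
            isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)
        ):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPERATORS:
            return _OPERATORS[type(node.op)](evaluate(node.left), evaluate(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -evaluate(node.operand)
        raise ValueError("unsupported expression")

    return evaluate(ast.parse(expression, mode="eval").body)


print(calculate("47 * 83"))  # 3901
```

The same call returns the same answer every time it runs, which is the whole point.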

So when an agent asks ChatGPT to “analyze recent sales in 78738 and estimate the value of a 2,400 square foot home on half an acre,” here is what is actually happening. The model is not querying an MLS database. It is not calculating price per square foot from real transaction data. It is not running geographic proximity analysis. It is generating text that looks like what a CMA should look like, based on patterns it learned during training. The numbers might be plausible. They might even be close. But they are predictions, not computations. And “might be close” is not a standard I am comfortable with when someone is pricing their biggest asset.

How a CMA Actually Gets Built (The Manual Version)

Before I explain what I built, let’s talk about what a CMA is supposed to be. Because most people (including some agents, sorry y’all) think it is just “finding comps.” It is not.

A proper comparative market analysis starts with pulling recent closed sales in the area. Not asking an AI to guess what sold recently. Actually querying MLS data for verified transactions. Then you filter by similarity. Square footage within a reasonable range. Same bedroom and bathroom count (or close). Similar lot size. Similar age. Same property type. You would not comp a 1960s ranch against a 2022 contemporary just because they happen to be on the same street, right?
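The similarity filter described above can be sketched in a few lines of Python. Every threshold here (20% on square footage, one bedroom, one bathroom, 15 years of age) is a hypothetical placeholder, not a recommendation and not the rule set of any real system. Lot size is left out to keep the sketch short, and the comps are labeled rather than given addresses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Property:
    label: str
    sqft: int
    beds: int
    baths: float
    year_built: int
    property_type: str


def is_comparable(subject, candidate, sqft_tolerance=0.20,
                  max_bed_gap=1, max_bath_gap=1.0, max_age_gap=15):
    """Apply fixed similarity rules; the default thresholds are illustrative only."""
    return (
        candidate.property_type == subject.property_type
        and abs(candidate.sqft - subject.sqft) <= sqft_tolerance * subject.sqft
        and abs(candidate.beds - subject.beds) <= max_bed_gap
        and abs(candidate.baths - subject.baths) <= max_bath_gap
        and abs(candidate.year_built - subject.year_built) <= max_age_gap
    )


subject = Property("subject", 2400, 4, 3.0, 2015, "single_family")
candidates = [
    Property("comp A", 2550, 4, 3.0, 2018, "single_family"),  # similar on every rule
    Property("comp B", 2300, 3, 2.5, 1962, "single_family"),  # 1960s ranch, too old
    Property("comp C", 3900, 5, 4.5, 2022, "single_family"),  # far too large
    Property("comp D", 2450, 4, 3.0, 2016, "condo"),          # wrong property type
]

comps = [c.label for c in candidates if is_comparable(subject, c)]
print(comps)  # ['comp A']
```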

Then comes the math. Price per square foot for each comp. Adjustments for differences (the comp had a pool, yours does not, so you subtract the approximate value of a pool). You look at proximity and recency because a sale from 18 months ago two miles away tells you less than one from last month across the street. You remove statistical outliers. You sanity check against tax assessed values and against what is currently active on the market.
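As a worked example of that math, here is a small Python sketch of price per square foot and a single feature adjustment. The sale price, square footage, and the $25,000 pool value are invented numbers for illustration only.

```python
def price_per_sqft(close_price, sqft):
    """Close price divided by square feet."""
    if sqft <= 0:
        raise ValueError("square footage must be positive")
    return close_price / sqft


def adjusted_price(close_price, adjustments):
    """Add signed dollar adjustments to a comp's close price.

    A negative value means the comp had something the subject lacks.
    """
    return close_price + sum(adjustments.values())


comp_close_price = 600_000
comp_sqft = 2_400

print(price_per_sqft(comp_close_price, comp_sqft))          # 250.0
print(adjusted_price(comp_close_price, {"pool": -25_000}))  # 575000
```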

This is a defined, repeatable, deterministic process. Every step involves actual arithmetic on real numbers from real transactions. It is exactly the kind of work that should be done by scripts, not by a language model predicting what the answer probably looks like.

And this is what I noticed when I started building my system. The entire CMA process is a series of IF/THEN decisions and arithmetic operations. Find properties matching these criteria. Calculate this ratio. Weight by this factor. Filter by this threshold. There is nothing ambiguous about it. A computer should be able to do every single step perfectly, every single time. No cherry-picking comps because you want the listing. No “gut feel” adjustments. Just math.

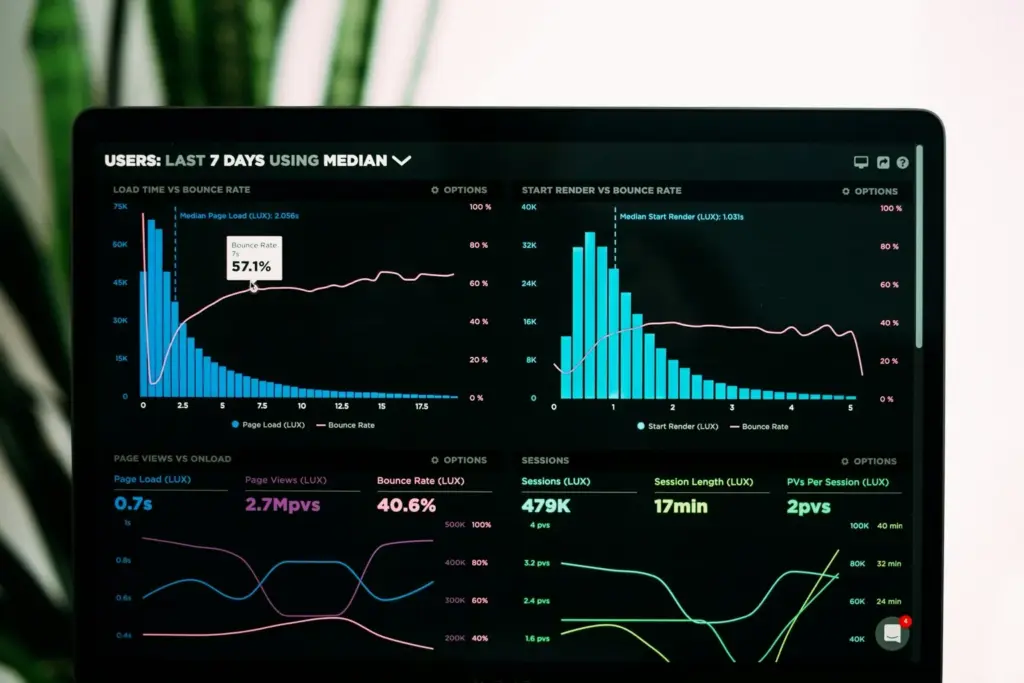

What I Built: Scripts Compute, AI Narrates

I have written about my AI-powered CMA system before, and about how accurate AI home valuations really are. But the thing I have not talked about explicitly until now is the architecture philosophy behind it. And it is embarrassingly simple.

Every number in the system is computed by deterministic code. Not predicted by AI.

The system queries real MLS closed sales data from a database of over 563,000 listings. It finds comps by geographic proximity using actual distance calculations. It filters by square footage, bedrooms, bathrooms, lot size, year built, and property type. It calculates price per square foot using actual division. Close price divided by square feet. Real arithmetic on real numbers.
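One common way to do an "actual distance calculation" between two properties is the haversine formula on latitude and longitude. The sketch below is an assumption about how such a step could look, not a description of the system in this article, and the coordinates are arbitrary.

```python
import math

EARTH_RADIUS_MILES = 3958.8  # mean Earth radius


def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in miles between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (
        math.sin(d_phi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    )
    return 2 * EARTH_RADIUS_MILES * math.asin(math.sqrt(a))


# One degree of latitude is roughly 69 miles.
distance = haversine_miles(30.0, -97.0, 31.0, -97.0)
print(round(distance, 1))  # 69.1
```

Sorting candidate comps by this distance gives a repeatable proximity ranking.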

It removes statistical outliers using standard deviation. It weights results across subdivision averages, school zone averages, and zip code averages. It runs sanity checks against tax assessed values and market bounds. It scores confidence based on how many good comps were available and how consistent the data is.
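Here is a hedged Python sketch of three of those steps: outlier removal by standard deviation, a weighted blend of area averages, and a sanity check against a reference value. The 1.5 standard deviation cutoff, the 0.5/0.3/0.2 weights, and the 25% tolerance are all made up for illustration. The real weights and formulas are not given in this article.

```python
from statistics import mean, pstdev


def remove_outliers(values, max_z=1.5):
    """Drop values more than max_z standard deviations from the mean."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return list(values)
    return [v for v in values if abs(v - mu) / sigma <= max_z]


def weighted_average(values_and_weights):
    """Blend (value, weight) pairs into one number."""
    total_weight = sum(weight for _, weight in values_and_weights)
    return sum(value * weight for value, weight in values_and_weights) / total_weight


def within_bounds(estimate, reference, tolerance=0.25):
    """Check that an estimate sits within a fractional band around a reference value."""
    return abs(estimate - reference) <= tolerance * reference


ppsf = [240, 250, 260, 245, 255, 600]  # one comp is clearly off
kept = remove_outliers(ppsf)
print(kept)  # [240, 250, 260, 245, 255]

# Hypothetical subdivision, school zone, and zip code averages with weights.
blended = weighted_average([(250, 0.5), (240, 0.3), (230, 0.2)])
print(round(blended, 2))  # 243.0

print(within_bounds(600_000, 520_000))  # True
print(within_bounds(900_000, 520_000))  # False
```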

And then, only after every number has been verified by code that does the same thing every single time it runs, AI writes the summary paragraph.

That is it. That is the whole philosophy. The math is real. The narrative is AI. Nassim Taleb has this concept of “antifragility” where systems get stronger under stress. I would not call my system antifragile (I am not that grandiose about it), but the principle applies. The deterministic rules handle every comp the same way every time. No hallucinations. No creative interpretation of what a close price divided by 2,400 square feet might equal.

I am not going to walk through the specific weights or formulas because that stays proprietary. But the principle is what matters here. If you are building any tool that touches real estate pricing, the math cannot be AI. Period.
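The "scripts compute, AI narrates" split can also be enforced in code. The Python sketch below is one possible guard, not the system described here: it scans a narrative for dollar amounts and flags any that the deterministic code did not produce. The function name, the sample sentences, and the figures are all hypothetical, and the narrative strings stand in for language model output.

```python
import re

# Matches comma-grouped dollar amounts such as $585,000.
DOLLAR_AMOUNT = re.compile(r"\$\d{1,3}(?:,\d{3})*")


def unverified_amounts(narrative, computed):
    """Return dollar amounts in the narrative that the code never computed."""
    allowed = {f"${value:,}" for value in computed.values()}
    return [amount for amount in DOLLAR_AMOUNT.findall(narrative) if amount not in allowed]


# Numbers produced by deterministic code upstream.
computed = {"low": 585_000, "high": 615_000}

good = "Recent closed sales support a range of $585,000 to $615,000."
bad = "Recent closed sales support a list price of $650,000."

print(unverified_amounts(good, computed))  # []
print(unverified_amounts(bad, computed))   # ['$650,000']
```

A narrative that fails the check is rejected or regenerated, so no number reaches a client unless code produced it.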

This Is More Than Just a Prompt

And this is the distinction I keep coming back to. The difference between typing “pull comps for 123 Main St” into ChatGPT and running a property through a purpose-built system is the difference between guessing and knowing.

A prompt gives you a confident-sounding answer that might be completely made up. The model has no way to tell you “hey, I do not actually have access to MLS data, so I am just generating plausible-sounding numbers.” It will never caveat itself that way. It will produce something that looks exactly like a professional CMA, complete with addresses and dollar amounts and percentage adjustments, and none of it will be grounded in actual transaction data.

A tool gives you verified numbers from real transactions, computed with real arithmetic, checked against real market bounds.

I have talked to agents who genuinely believed ChatGPT was “pulling data” when they asked it for comps. It is not pulling anything. It is generating. Those are fundamentally different operations and mixing them up is how people get hurt financially. That is not hyperbole. Someone is going to price their house tens of thousands of dollars too high because an AI said so, sit on the market for four months, and end up selling for less than they would have if they had just used real data from the start.

The Real Risk: Liability Is Not Theoretical

OK, so maybe you are thinking “sure, the numbers might be off, but how bad could it really be?” Let’s talk about what happens when AI gets real estate facts wrong and someone relies on them.

The Realtor Association of Sarasota and Manatee (RASM) put out a warning in March 2026 about AI hallucinating property facts. Not vague concerns. Specific examples. AI-generated listing descriptions that included features like “new roof” and “hurricane impact windows” that did not exist on the property. Their exact quote: “If it is in your MLS remarks, your flyer, your Facebook ad, or your website, you are responsible.”

That is not a hypothetical. That is a regulatory body telling agents they own whatever AI produces under their name.

And the legal precedent is building fast. Damien Charlotin’s database of AI hallucination cases in legal filings now tracks 1,275 court cases where AI-generated content contained fabricated information. Sanctions include 5,597 in a single case. These are attorneys who used AI for legal research and cited cases that did not exist. Now imagine the same dynamic playing out with property valuations that determine sale prices. The math errors are harder to catch because they look plausible. At least a made-up court case can be Googled. A made-up comp at a made-up price per square foot? That takes real market knowledge to spot.

The NAR Code of Ethics Article 2 says agents are responsible for the accuracy of all content they produce or cause to be produced, regardless of what tool generated it. “I had ChatGPT do it” is not a defense. Using AI does not transfer liability. It just adds a new and very creative way to be wrong.

And the CFPB approved a rule in 2024 specifically about AI and algorithms in home valuations. Their language was blunt: there is “no fancy technology exemption” for consumer protection laws. If your automated valuation model produces discriminatory or inaccurate results, the fact that an algorithm did it does not protect you. Full stop.

Even Purpose-Built Systems Get It Wrong

And here is the thing that really should give everyone pause.

Zillow. A company with some of the best data scientists in real estate. Proprietary access to more housing data than almost anyone on earth. They built a purpose-built automated valuation model. Not a ChatGPT prompt. An actual, engineered, enterprise-grade pricing algorithm with years of development and hundreds of millions of dollars behind it.

They used it to buy homes through their iBuying program, Zillow Offers.

They lost $881 million in a single year and laid off 2,000 people.

Zillow. With all their data. With all their engineers. With a system specifically designed to predict home values. Got it wrong badly enough to nearly sink a publicly traded company.

So when I hear agents say they are using ChatGPT to generate property valuations (a general-purpose language model that was not designed for math, does not have access to MLS data, and hallucinates freely), I do not know what to say. If Zillow’s custom-built pricing engine got it catastrophically wrong, what chance does a ChatGPT prompt have? I think the answer is zero but I am trying to be diplomatic about it.

That is not me being negative about AI. I use AI every single day and I have written extensively about how it transformed my business. But I use it for what it is good at. Language. Narrative. Pattern recognition in text. Summarizing complex information into clear communication. I do not use it for math. And neither should you.

What Brokerage Leaders Should Be Demanding

According to WAV Group’s survey of real estate leaders, 97% of brokerage leaders say their agents are already using AI. But 49% rate their concern about guardrails as 7 out of 10 or higher. Almost everyone is using it. Almost half are worried their people are using it wrong. That gap right there is the problem.

On the commercial side, it is even more stark. A Keyway and Commercial Observer survey found that 44% of investment committees actively distrust AI-generated analysis, and 41% specifically cite hallucinations as their top concern. These are sophisticated investors with fiduciary obligations telling you they do not trust it.

So the industry knows this is a problem. The question is what to do about it.

The answer is not to stop using AI. That ship has sailed and honestly it should have. AI is incredible. The answer is to build systems where the division of labor is clear. Scripts compute. AI narrates. Deterministic code handles every number, every calculation, every data lookup. AI handles the part it is actually good at, which is turning verified data into clear, readable communication.

If you are a managing broker, here is what I would be asking my vendors: “Show me where in your system the math happens. Is it computed by code or predicted by a language model? Can you guarantee the same inputs produce the same outputs every time? Where does AI enter the pipeline, and what does it touch?”

If they cannot answer those questions clearly, their tool is a prompt dressed up as a product.
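The "same inputs, same outputs" question is easy to test when the math lives in code. Below is a minimal Python sketch with a deliberately simple placeholder valuation function and a hash of the inputs and result for an audit trail. The function names and the numbers are assumptions for illustration, not any vendor's method.

```python
import hashlib
import json


def estimate_value(subject_sqft, comp_ppsf):
    """Pure function: no randomness, no clock, no network calls."""
    average_ppsf = sum(comp_ppsf) / len(comp_ppsf)
    return round(subject_sqft * average_ppsf)


def fingerprint(payload):
    """Stable SHA-256 hash of a JSON-serializable record, independent of key order."""
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


inputs = {"subject_sqft": 2400, "comp_ppsf": [240, 250, 260]}

first = estimate_value(**inputs)
second = estimate_value(**inputs)
print(first, first == second)  # 600000 True

# Store the record and its hash so the run can be audited later.
record = {"inputs": inputs, "estimate": first}
audit_hash = fingerprint(record)
```

If a rerun with the stored inputs produces a different hash, something in the pipeline is not deterministic.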

And if your vendors cannot build it right, build it yourself. I did. It is not as scary as it sounds (ok it is a little scary but less scary than getting sued for a bad CMA). The hardest part is not the technology. The hardest part is understanding your own process well enough to codify it. Once you can describe exactly how you select comps, exactly how you weight adjustments, exactly how you determine a price range, a developer can turn that into deterministic code.

But you have to know your process first. You have to have done enough CMAs by hand to know what good looks like. The tortoise wins here too.

Where AI Actually Helps in Real Estate

I do not want this to come across as some anti-AI screed. That is not the point at all. I am probably one of the most aggressive AI adopters in residential real estate and I am not slowing down.

AI is incredible at things like writing listing descriptions from bullet points. Summarizing market reports into client-friendly language. Drafting email responses. Analyzing sentiment in client communications. Generating marketing copy. Processing and organizing large amounts of unstructured text. I even wrote about AI and compliance because I think agents need to understand both the power and the boundaries.

Notice what all those tasks have in common. They are language tasks. They are the thing language models were literally designed to do.

The problem only shows up when people ask a language tool to do a math job. And in real estate specifically, the math jobs are the ones with the biggest consequences. What is this house worth? How much equity does this seller have? What is the ROI on this investment property? What are the closing costs on this transaction? Those questions have definitive answers, and real people make real financial decisions based on them.

The right approach, and this is what I keep telling agents who ask me about it, is to use AI for what it is actually good at and build proper computational tools for everything else. That is what I did with the CMA system. That is what I think every brokerage should be doing for any tool that touches pricing, valuation, or financial analysis.

Build Tools, Not Prompts

Here is the takeaway. AI is a narrator, not a calculator. It is exceptionally good at turning verified information into clear, compelling language. It is dangerously bad at producing the verified information in the first place.

If you are a seller wondering what your home is worth, work with someone who can show you where the numbers actually come from. Not someone who typed your address into a chatbot and printed what came back.

If you are an agent, start thinking about your CMA process as a system, not a task. Codify it. Automate the math. Let AI handle the writing. That is how you build something that scales without sacrificing accuracy.

And if you are a brokerage leader, demand transparency from every AI tool your agents use. Where does the math happen? Is it computed or predicted? Can you audit the inputs? If the vendor cannot answer those questions, find one who can.

At Neuhaus Realty Group, we built the tool because we were not willing to trust the most important number in real estate to a system that guesses. I have been doing this for 19 years. The math has to be real.

Want to see what a data-driven valuation looks like for your property? Let’s talk. I will run your home through the system and walk you through exactly what the numbers say. No pitch, just data and an honest conversation about what your home is actually worth.

Be safe, be good, and be nice to people.

Need Strategic Guidance on Real Estate Technology?

Ed Neuhaus advises brokerage leadership, MLS organizations, and PropTech companies on AI strategy, data architecture, and technology decisions.